A Calcutta Bengali’s Guide to the Various Schools of AI

Artificial Intelligence [AI, the attempt to make machines perform tasks that look intelligent when humans do them] has always looked less like one science and more like College Street after rain: crowded, argumentative, slightly electrified, and full of men insisting that their particular stall contains the one true book.

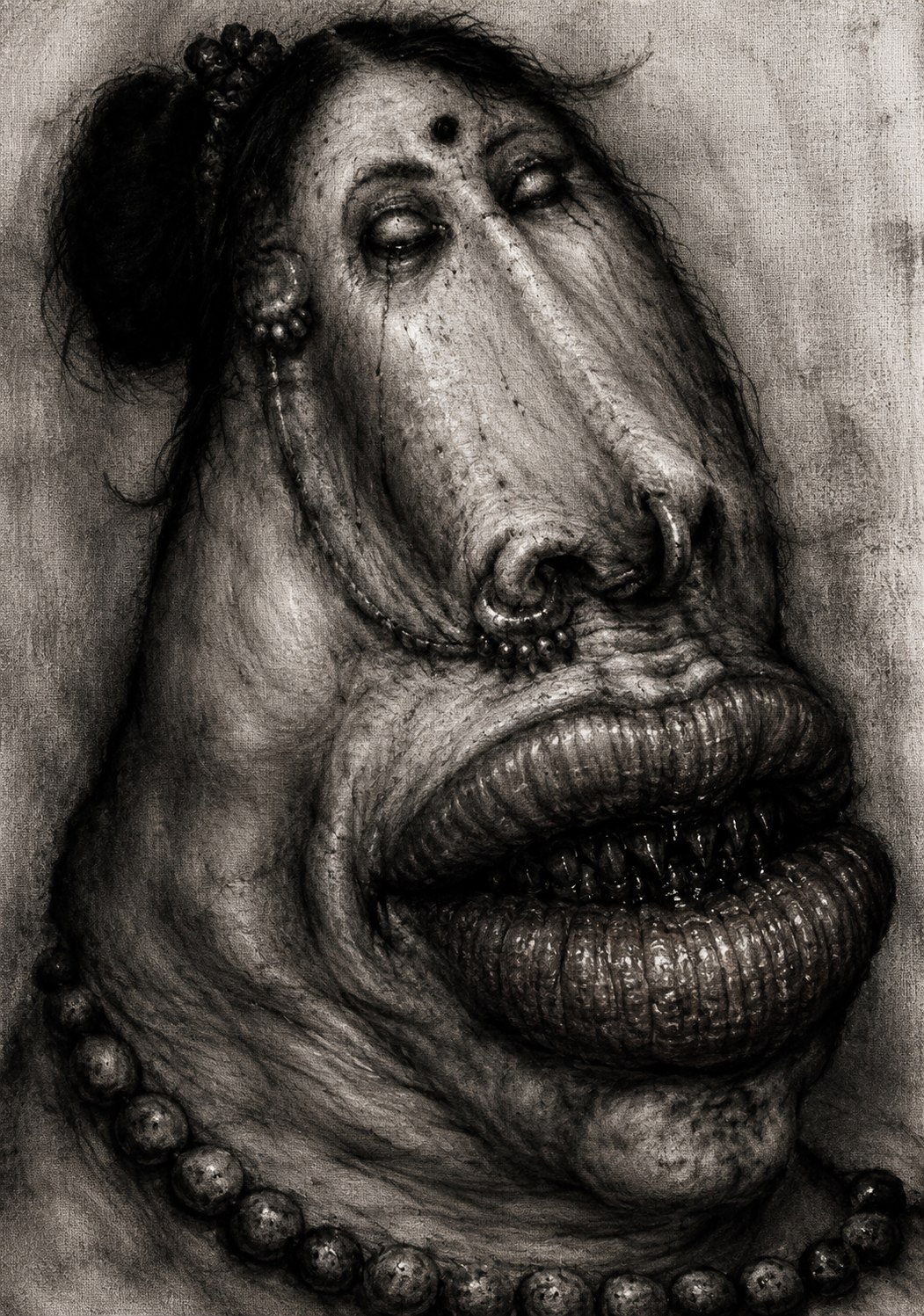

The first thing a Calcutta Bengali must understand about AI is that it is not a single royal road to machine intelligence. It is a para meeting that never adjourned. Each school arrived with its own theory of the mind, its own mathematics, its own sacred machinery, and its own private contempt for the others. The symbol people thought intelligence was logic wearing a dhoti. The neural people thought intelligence was pattern recognition sweating inside a giant numerical fish market. The Bayesians brought probability and the expression of a person who has just corrected your grammar in public. The evolutionary crowd threw solutions into computational mud and waited for the fittest little monster to crawl out. The reinforcement learners trained agents by reward and punishment, which is basically every Bengali childhood, minus the Horlicks.

The old symbolic school came first in spirit, though not in every detail of chronology. Its religion was that thought could be represented as symbols and rules. If humans reasoned with concepts like “person,” “disease,” “cause,” “uncle,” “loan,” “fish,” and “disaster,” then perhaps computers could manipulate symbols too. This became the world of logic programming, theorem proving, planning, search, knowledge representation, and Good Old-Fashioned Artificial Intelligence [GOFAI, a later nickname for rule-heavy symbolic AI]. In its proudest form, it imagined intelligence as a very large clerk’s office where every file was labeled correctly, every rule was explicit, and nothing important had been spilled on the paper.

The symbolic tribe gave us powerful ideas. Search. Planning. Formal reasoning. Expert rules. Ontologies. Frames. Production systems. These were not toys. They shaped compilers, theorem provers, diagnostic systems, scheduling engines, and knowledge-based systems. The trouble was not that symbols were stupid. The trouble was that the world is a disgraceful tenant. It refuses to keep its categories clean. A cat is a cat until it is a blurry cat in poor light, a toy cat, a dead cat, a metaphorical cat, a cat-like shadow, or someone’s WhatsApp display picture taken in 2014. Symbolic AI could reason beautifully once the representation was in place, but building and maintaining that representation turned out to be like trying to alphabetize a fish market during a power cut.

Then came the neural network crowd, whose central hunch was older than many people realize: maybe intelligence is not mainly rule-writing, but weight-adjusting. Instead of telling the system what every object means, give it examples and let it learn statistical structure. Rosenblatt’s perceptron suggested the idea as early as the late 1950s. Later, backpropagation made multilayer networks trainable in a practical way. Backpropagation is not mystical. It is a bookkeeping system for blame. The network makes an error, the chain rule carries that error backward through the layers, and each internal weight receives a gradient: a tiny moral lecture about how much it contributed to the stupidity, and in which direction it should change.
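To make the bookkeeping concrete, here is a minimal sketch in plain Python. It is an illustrative assumption, not any particular library’s implementation: a tiny network with one input, one tanh hidden unit and one linear output, scored by squared error on a single example. The backward pass applies the chain rule by hand, and a finite-difference check confirms that each weight received its correct share of blame.

```python
import math

def forward(w1, w2, x):
    """Tiny network: x -> h = tanh(w1 * x) -> y_hat = w2 * h."""
    h = math.tanh(w1 * x)
    return h, w2 * h

def loss(w1, w2, x, y):
    """Squared error for a single example."""
    _, y_hat = forward(w1, w2, x)
    return 0.5 * (y_hat - y) ** 2

def backprop(w1, w2, x, y):
    """Return (dL/dw1, dL/dw2) by pushing the error backward with the chain rule."""
    h, y_hat = forward(w1, w2, x)
    d_yhat = y_hat - y               # blame at the output
    d_w2 = d_yhat * h                # output weight's share
    d_h = d_yhat * w2                # blame passed back to the hidden unit
    d_w1 = d_h * (1 - h * h) * x     # through tanh'(z) = 1 - tanh(z)^2
    return d_w1, d_w2

def numeric_grad(w1, w2, x, y, eps=1e-6):
    """Central finite differences, used only to audit backprop."""
    g1 = (loss(w1 + eps, w2, x, y) - loss(w1 - eps, w2, x, y)) / (2 * eps)
    g2 = (loss(w1, w2 + eps, x, y) - loss(w1, w2 - eps, x, y)) / (2 * eps)
    return g1, g2

w1, w2, x, y = 0.5, -1.2, 0.8, 1.0
analytic = backprop(w1, w2, x, y)
numeric = numeric_grad(w1, w2, x, y)
print(analytic, numeric)

# One gradient-descent step: each weight moves against its own blame.
lr = 0.1
w1_new, w2_new = w1 - lr * analytic[0], w2 - lr * analytic[1]
print(loss(w1, w2, x, y), loss(w1_new, w2_new, x, y))
```

Real frameworks automate exactly this bookkeeping across millions of weights; the finite-difference check is far too slow for training, which is why it appears here only as an audit.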

The neural tribe’s charm is that it does not demand that humans hand-code all meaning in advance. Its scandal is that it often learns without telling you, in civilized language, what it has learned. A neural network is like a brilliant student from South Calcutta who solves the problem perfectly but refuses to show the working. You may admire the answer. You may fear the answer. You may not want this student approving mortgages, interpreting scans, or driving a bus through Shyambazar five-point crossing unless you understand the failure modes.

Deep learning is the neural school after eating a warehouse of processors. Deep Learning [DL, learning from data with large multilayer neural networks] took the old neural idea and made it enormous. More layers. More data. More compute. More parameters than a Bengali family has opinions. Images, speech, translation, protein structures, code, medical imaging, language generation: deep learning marched into these territories with the rude confidence of a man who has never tried to get a land record corrected in a municipal office.

The great non-obvious trick of deep learning is representation learning. Earlier systems often needed humans to design features: edges, shapes, ratios, hand-engineered signals, clever variables. Deep networks learn internal representations from data. A model trained on language does not store “meaning” the way a dictionary does; it learns high-dimensional statistical relationships among tokens, contexts, and patterns. This is why Large Language Models [LLMs, deep learning systems trained to predict and generate language-like sequences] can sound uncannily fluent while still hallucinating like a retired uncle with a weak memory and a strong opinion. They have absorbed form and association at colossal scale. That is not nothing. It is also not the same as grounded truth.

The Bayesian school entered with a very Bengali virtue: suspicion. Bayesians do not merely ask, “What is the answer?” They ask, “Given what we already believed, and given this new evidence, how should our belief change?” Bayes’ theorem is the mathematics of updating under uncertainty. In daily life, it is the difference between seeing one cloud and canceling Durga Puja, and seeing the sky turn black over the Hooghly while the wind starts behaving like a drunk electrician. Evidence matters. Prior belief matters. The update matters.
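In symbols, Bayes’ theorem says P(H | E) = P(E | H) P(H) / P(E). The sketch below applies it to the Hooghly sky. All the probabilities are invented for illustration, and the function name `bayes_update` is hypothetical. The second update assumes the wind and the black sky are conditionally independent given the weather, which is a modelling assumption, not a fact about Calcutta.

```python
def bayes_update(prior, p_evidence_given_h, p_evidence_given_not_h):
    """Posterior P(H | E) for a binary hypothesis H via Bayes' theorem."""
    # P(E): total probability of the evidence, summed over H and not-H.
    p_evidence = p_evidence_given_h * prior + p_evidence_given_not_h * (1 - prior)
    return p_evidence_given_h * prior / p_evidence

# Illustrative numbers only (assumed, not measured):
prior_rain = 0.3        # belief in a downpour before looking up
p_black_if_rain = 0.9   # black sky over the Hooghly when rain is coming
p_black_if_dry = 0.2    # black sky that turns out to be pure drama

posterior = bayes_update(prior_rain, p_black_if_rain, p_black_if_dry)
print(round(posterior, 3))  # 0.27 / 0.41, about 0.659

# New evidence (the drunk-electrician wind): yesterday's posterior is today's prior.
posterior2 = bayes_update(posterior, 0.8, 0.3)
print(round(posterior2, 3))
```

The design point is the chaining: each update starts from the previous belief, so one cloud moves you a little and a black sky plus a howling wind moves you a lot.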

Bayesian AI gave us probabilistic graphical models, Bayesian networks, hidden Markov models, probabilistic inference, uncertainty quantification, and a grown-up attitude toward incomplete information. The Bayesian world is excellent when uncertainty itself is part of the problem. Medical diagnosis, sensor fusion, speech recognition, risk scoring, fraud detection, and causal reasoning all benefit from probabilistic thinking. Its weakness is that exact inference can become computationally nasty, and specifying the structure of the model can require a level of discipline not always found in human institutions, academic departments, or software teams after the third reorganization.

The analogizers are the tribe of similarity. Their basic question is not “What rule proves this?” but “What is this close to?” Nearest-neighbor methods, kernel methods, and Support Vector Machines [SVMs, classifiers that try to separate data by maximizing the margin between classes] live in this neighborhood. An SVM is often explained as drawing a line between categories, but that is a kindergarten version of a more elegant trick. With kernels, the method can act as if the data has been lifted into a higher-dimensional space where separation becomes easier. Very Calcutta, really. If the street is too crowded, imagine a flyover.
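The flyover can be checked by hand. For 2-D points, the degree-2 polynomial kernel k(x, z) = (x · z)² equals an ordinary dot product after lifting each point with φ(x) = (x₁², √2·x₁x₂, x₂²). The sketch below, with illustrative names `phi` and `poly_kernel`, verifies that identity and shows that points inside versus outside the unit circle, which no straight line separates in 2-D, become separable by a flat plane in the lifted space. It is not an SVM; it only demonstrates the identity SVMs exploit so that they never have to compute φ explicitly.

```python
import math

def phi(x):
    """Explicit degree-2 feature map for a 2-D point: the 'flyover'."""
    x1, x2 = x
    return (x1 * x1, math.sqrt(2) * x1 * x2, x2 * x2)

def dot(a, b):
    return sum(ai * bi for ai, bi in zip(a, b))

def poly_kernel(x, z):
    """Homogeneous polynomial kernel of degree 2: k(x, z) = (x . z)^2."""
    return dot(x, z) ** 2

x, z = (1.0, 2.0), (3.0, -1.0)
# Equal up to floating-point rounding: (1*3 + 2*(-1))^2 = 1.
print(poly_kernel(x, z), dot(phi(x), phi(z)))

# In the lifted space, "phi[0] + phi[2] < 1" is a linear rule: w . phi(p) + b < 0.
w, b = (1.0, 0.0, 1.0), -1.0
inside = [(0.1, 0.2), (-0.5, 0.3), (0.0, -0.6)]
outside = [(1.2, 0.0), (-0.9, 0.9), (0.3, -1.5)]

def is_inside(p):
    return dot(w, phi(p)) + b < 0

print([is_inside(p) for p in inside], [is_inside(p) for p in outside])
```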

Similarity-based AI works because much of intelligence is comparison. This scan resembles prior malignant cases. This transaction resembles fraud. This sentence resembles sarcasm. This patient trajectory resembles a known risk cluster. But similarity is never innocent. Two things can be mathematically close and clinically different, socially different, morally different, or different in the one boring operational way that matters at 2:17 a.m. when the system fails. The analogizer’s curse is that distance is not meaning. A metric is a philosophy smuggled into arithmetic.
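“A metric is a philosophy” can be shown in a dozen lines. In the sketch below the two stored cases and the query are made-up vectors, and `nearest` is a hypothetical helper. Under Euclidean distance the query resembles case B; under cosine distance, which ignores magnitude and looks only at direction, it resembles case A. Neither answer is wrong. They encode different beliefs about what “close” means.

```python
import math

def euclidean(a, b):
    return math.dist(a, b)

def cosine_distance(a, b):
    """1 - cosine similarity: compares direction, ignores magnitude."""
    dot = sum(x * y for x, y in zip(a, b))
    return 1 - dot / (math.hypot(*a) * math.hypot(*b))

def nearest(query, cases, metric):
    """Label of the stored case closest to the query under `metric`."""
    return min(cases, key=lambda item: metric(query, item[1]))[0]

# Hypothetical cases: (label, feature vector). Values are illustrative only.
cases = [
    ("case A", (1.0, 1.0)),   # much smaller, but pointing the same way as the query
    ("case B", (9.0, 6.0)),   # similar size, slightly different direction
]
query = (10.0, 10.0)

print(nearest(query, cases, euclidean))        # case B
print(nearest(query, cases, cosine_distance))  # case A
```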

The evolutionary tribe looked at nature and said, reasonably enough, that intelligence might not need to be designed top-down. Perhaps it could be bred. Genetic Algorithms [GAs, optimization methods inspired by selection, mutation, and recombination] and Genetic Programming [GP, evolving computer programs or program-like structures] begin with populations of candidate solutions. Some perform better. Some are discarded. Some are mutated. Some are recombined. The process repeats until something useful, surprising, or mildly grotesque emerges.
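The loop of select, recombine, mutate and repeat fits in a short sketch. As an assumption for illustration, the fitness function is the classic toy “OneMax” (count the 1s in a bit string), standing in for any real objective. Tournament selection, one-point crossover, bit-flip mutation and keeping the single best individual (elitism) are common textbook choices, not the only ones.

```python
import random

def fitness(bits):
    """OneMax: number of 1s. A stand-in for a real objective."""
    return sum(bits)

def tournament(pop, rng, k=3):
    """Selection: the fittest of k randomly chosen individuals."""
    return max(rng.sample(pop, k), key=fitness)

def crossover(a, b, rng):
    """One-point recombination of two parents."""
    cut = rng.randrange(1, len(a))
    return a[:cut] + b[cut:]

def mutate(bits, rng, rate):
    """Flip each bit independently with probability `rate`."""
    return [1 - b if rng.random() < rate else b for b in bits]

def evolve(n_bits=30, pop_size=40, generations=60, rate=0.02, seed=0):
    rng = random.Random(seed)
    pop = [[rng.randint(0, 1) for _ in range(n_bits)] for _ in range(pop_size)]
    for _ in range(generations):
        children = [max(pop, key=fitness)]    # elitism: the champion survives as-is
        while len(children) < pop_size:
            child = crossover(tournament(pop, rng), tournament(pop, rng), rng)
            children.append(mutate(child, rng, rate))
        pop = children
    return max(pop, key=fitness)

best = evolve()
print(fitness(best), "of", len(best))
```

Note that the algorithm knows nothing about *why* 1s are good; it only obeys `fitness`. Swap in a fitness function with a loophole and it will exploit the loophole just as diligently.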

This is AI as a para tournament organized by Darwin and sponsored by chaos. It can find clever solutions where the search space is too large or strange for tidy reasoning. It can also waste vast effort producing things that work beautifully for the wrong reason. Evolutionary methods are excellent reminders that optimization does not care about dignity. If your fitness function rewards a loophole, the algorithm will find the loophole and dance inside it wearing your best shoes.

Reinforcement Learning [RL, learning actions through rewards and penalties over time] is the school of consequence. An agent acts in an environment. It receives rewards or punishments. Over time, it learns a policy: what to do in which state. This is often formalized through a Markov Decision Process [MDP, a mathematical model where decisions affect future states and rewards]. The glamour version is game-playing AI beating champions. The less glamorous version is every child learning which drawer contains biscuits and which adult contains thunder.
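A minimal sketch of learning a policy from reward, assuming a toy MDP invented here: a five-cell corridor whose last cell holds the biscuits. The agent uses tabular Q-learning, one standard RL algorithm among many, with ε-greedy exploration. It is never told “go right”; it only receives a reward of 1 on reaching the drawer, and the discount factor `gamma` makes nearer biscuits worth more than distant ones.

```python
import random

N_STATES = 5           # corridor cells 0..4; cell 4 is the biscuit drawer
ACTIONS = (-1, +1)     # step left, step right
GOAL = N_STATES - 1

def step(state, action):
    """Environment: move, stay inside the corridor, reward 1 only at the goal."""
    nxt = min(max(state + action, 0), GOAL)
    reward = 1.0 if nxt == GOAL else 0.0
    return nxt, reward, nxt == GOAL

def q_learning(episodes=200, alpha=0.5, gamma=0.9, epsilon=0.1, seed=0):
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_STATES)]   # q[state][action index]
    for _ in range(episodes):
        s, done = 0, False
        while not done:
            # epsilon-greedy: usually exploit, sometimes explore; ties broken randomly
            if rng.random() < epsilon or q[s][0] == q[s][1]:
                a = rng.randrange(2)
            else:
                a = 0 if q[s][0] > q[s][1] else 1
            s2, r, done = step(s, ACTIONS[a])
            target = r if done else r + gamma * max(q[s2])
            q[s][a] += alpha * (target - q[s][a])   # temporal-difference update
            s = s2
    return q

q = q_learning()
policy = ["left" if qa[0] > qa[1] else "right" for qa in q[:GOAL]]
print(policy)
```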

RL is powerful because it handles sequential decision-making. Chess, Go, robotics, recommendation systems, resource allocation, control problems, and automated trading all contain decisions whose value depends on what happens later. Its danger is reward design. Ask the system to maximize watch time and it may discover that irritation is a splendid adhesive. Ask it to reduce cost and it may quietly damage quality. Ask it to win a game and it may behave like a genius. Ask it to optimize a society and you should probably hide the matchsticks.

Fuzzy logic is the school for anyone who has noticed that life rarely arrives as true or false. Is this patient “old”? Is this room “hot”? Is this loan “risky”? Is this fish “fresh”? Classical logic wants crisp boundaries. Fuzzy Logic [FL, reasoning with degrees of truth rather than binary truth values] allows partial membership. Something can be somewhat tall, very risky, mildly abnormal, almost acceptable, or suspiciously Hilsa-adjacent.

Fuzzy systems were especially attractive in control systems and decision rules where human categories are graded rather than binary. They are not sloppy logic. They are a formal way of handling vagueness. But they do require carefully designed membership functions, and here again the goblin appears: representation. If the categories are badly designed, fuzzy logic will not save you. It will merely give your confusion decimal places.
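Here is what graded membership looks like in code. This is a minimal sketch: the categories “hot”, “humid” and “comfortable” and every breakpoint in them are hypothetical design choices, which is exactly the representation problem described above. AND, OR and NOT are taken as min, max and 1 − x, the common Zadeh operators; other operator families exist.

```python
def ramp_up(x, start, full):
    """Membership rising linearly from 0 at `start` to 1 at `full`."""
    if x <= start:
        return 0.0
    if x >= full:
        return 1.0
    return (x - start) / (full - start)

def ramp_down(x, full, end):
    """Membership 1 up to `full`, falling linearly to 0 at `end`."""
    return 1.0 - ramp_up(x, full, end)

# Hypothetical membership functions; the breakpoints are opinions, not physics.
def hot(temp_c):
    return ramp_up(temp_c, 26.0, 34.0)

def humid(rh_percent):
    return ramp_up(rh_percent, 60.0, 90.0)

def comfortable(temp_c):
    return ramp_down(temp_c, 24.0, 30.0)

# Zadeh operators: AND = min, OR = max, NOT = 1 - x.
def fuzzy_and(a, b):
    return min(a, b)

def fuzzy_or(a, b):
    return max(a, b)

def fuzzy_not(a):
    return 1.0 - a

t, rh = 31.0, 84.0
muggy = fuzzy_and(hot(t), humid(rh))   # "hot AND humid", to a degree
print(hot(t), humid(rh), muggy, fuzzy_not(comfortable(t)))
```

A 31 °C afternoon at 84% humidity comes out 0.625 hot, 0.8 humid, and therefore 0.625 muggy. Move the breakpoints and the same afternoon gets a different verdict, with equally confident decimals.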

Expert systems were the grand old ambition of stuffing expert knowledge into rules. A medical expert system might contain rules about symptoms, lab values, diagnoses, and recommendations. A configuration expert system might guide technical decisions. The dream was noble: capture scarce human expertise and make it available consistently. The reality was more mixed. Expert knowledge is often tacit, contextual, contested, and updated informally. The rule base grows. Exceptions multiply. Maintenance becomes expensive. The expert retires. The system remains, like a dusty almirah full of files nobody wants to throw away because some of them may still matter.

This does not mean expert systems were useless. Far from it. Their descendants live everywhere: decision support, business rules engines, configuration systems, compliance checks, eligibility logic, clinical alerts, fraud flags. The lesson is subtler. Rules work best where the domain is stable, well-governed, and semantically explicit. They fail where reality is fluid, incentives are bent, or the organization cannot agree what its own words mean.
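The engine room of a classic rule-based system can be sketched as forward chaining: keep firing every rule whose conditions are all known facts until nothing new appears. The fish-market rule base below is entirely hypothetical and deliberately tiny; production rule engines add conflict resolution, retraction and far better indexing. The returned trace doubles as a crude explanation of how each conclusion was reached.

```python
# Hypothetical rule base: (set of required facts, concluded fact).
RULES = [
    ({"gills_red", "eyes_clear"}, "fish_fresh"),
    ({"fish_fresh", "price_reasonable"}, "buy_fish"),
    ({"smell_strong"}, "fish_suspect"),
    ({"fish_suspect"}, "ask_another_stall"),
]

def forward_chain(facts, rules):
    """Fire rules until no new facts are derived.

    Returns the final fact set and the order in which conclusions were drawn.
    """
    known = set(facts)
    trace = []
    changed = True
    while changed:
        changed = False
        for conditions, conclusion in rules:
            if conditions <= known and conclusion not in known:
                known.add(conclusion)
                trace.append((sorted(conditions), conclusion))
                changed = True
    return known, trace

known, trace = forward_chain({"gills_red", "eyes_clear", "price_reasonable"}, RULES)
for conditions, conclusion in trace:
    print(" AND ".join(conditions), "=>", conclusion)
```

Every exception the real fish market throws at you becomes another rule in `RULES`, which is how the dusty almirah fills up.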

Probabilistic programming is what happens when probability and programming languages have a thoughtful child who grows up reading too many statistics papers. Instead of writing a fixed statistical model by hand, one writes a program that describes a generative process, and the system performs inference. This can be elegant because many real processes are easier to describe as “how the data might have been produced” than as a direct formula for prediction. It is also computationally demanding and intellectually unforgiving. A probabilistic program can look beautifully transparent while hiding a swamp of assumptions under the floorboards.
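The “describe how the data was produced, then let the system infer” idea can be shown with the crudest correct inference method, rejection sampling. The generative program below draws an unknown coin bias from a uniform prior and flips the coin ten times; inference simply reruns the program and keeps the runs that reproduce the observed seven heads. The model and the function names are illustrative assumptions. Real probabilistic programming systems use far more efficient methods such as MCMC or variational inference, because rejection sampling collapses as data grows.

```python
import random

def generative_model(rng, n_flips=10):
    """The 'program': draw an unknown bias, then flip a coin with that bias."""
    bias = rng.random()                                    # prior: uniform on [0, 1)
    heads = sum(rng.random() < bias for _ in range(n_flips))
    return bias, heads

def rejection_inference(observed_heads, n_samples=100_000, seed=1):
    """Run the program many times; keep only runs that reproduce the data."""
    rng = random.Random(seed)
    accepted = []
    for _ in range(n_samples):
        bias, heads = generative_model(rng)
        if heads == observed_heads:
            accepted.append(bias)
    return accepted

posterior = rejection_inference(observed_heads=7)
estimate = sum(posterior) / len(posterior)
print(len(posterior), round(estimate, 3))
# With a uniform prior the exact posterior is Beta(8, 4), whose mean is 8/12.
```

The swamp under the floorboards is visible even here: the uniform prior, the independence of flips and the fixed number of flips are all assumptions baked into `generative_model`.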

Swarm intelligence watches ants, bees, birds, and other collective creatures and says, “What if intelligence is not inside one head but distributed across many simple agents?” Ant Colony Optimization [ACO, search inspired by ants laying and following pheromone trails] and Particle Swarm Optimization [PSO, optimization inspired by flocking and collective movement] belong here. The charm is obvious. No single ant understands the whole colony. Yet the colony finds food, builds structure, adapts, persists. Anyone who has watched a Bengali wedding function knows that swarm behavior can produce both astonishing coordination and complete logistical ruin.
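A minimal Particle Swarm Optimization sketch, assuming a toy objective (the sum of squares, minimized at the origin) in place of a real problem. No particle knows the landscape. Each is pulled toward the best spot it has personally found and the best spot the flock has found, with an inertia term `w` keeping it moving. The values w = 0.7 and c1 = c2 = 1.5 are common illustrative settings, not tuned constants.

```python
import random

def sphere(pos):
    """Toy objective to minimize: sum of squares, optimum 0 at the origin."""
    return sum(x * x for x in pos)

def pso(f, dim=2, n_particles=20, iters=100, w=0.7, c1=1.5, c2=1.5, seed=0):
    rng = random.Random(seed)
    pos = [[rng.uniform(-5, 5) for _ in range(dim)] for _ in range(n_particles)]
    vel = [[0.0] * dim for _ in range(n_particles)]
    best_pos = [p[:] for p in pos]          # each particle's personal best
    best_val = [f(p) for p in pos]
    g = min(range(n_particles), key=lambda i: best_val[i])
    g_pos, g_val = best_pos[g][:], best_val[g]   # the swarm's shared best
    for _ in range(iters):
        for i in range(n_particles):
            for d in range(dim):
                r1, r2 = rng.random(), rng.random()
                vel[i][d] = (w * vel[i][d]
                             + c1 * r1 * (best_pos[i][d] - pos[i][d])   # own memory
                             + c2 * r2 * (g_pos[d] - pos[i][d]))        # the flock
                pos[i][d] += vel[i][d]
            val = f(pos[i])
            if val < best_val[i]:
                best_pos[i], best_val[i] = pos[i][:], val
                if val < g_val:
                    g_pos, g_val = pos[i][:], val
    return g_pos, g_val

g_pos, g_val = pso(sphere)
print(g_pos, g_val)
```

On a smooth bowl like this the swarm converges quickly; on a rugged landscape the same social pull can herd everyone into the wrong valley, which is the wedding-logistics failure mode.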

Neuro-symbolic AI is the attempted ceasefire between the old symbol clerks and the neural weight-adjusters. The idea is simple to state and hard to do: combine neural learning with symbolic reasoning. Let neural systems perceive, classify, retrieve, and generalize from messy data; let symbolic systems reason, constrain, explain, and manipulate structured knowledge. This is attractive because the weaknesses are complementary. Neural systems are flexible but opaque. Symbolic systems are interpretable but brittle. A good hybrid could, in theory, learn from the world without forgetting how to reason about it.

The catch is that “combine them” is doing the work of three doctoral programs and a nervous breakdown. Neural representations are continuous, distributed, and statistical. Symbolic representations are discrete, compositional, and explicit. The bridge between them is not a decorative connector. It is the central engineering problem. If solved well, it could matter enormously for scientific reasoning, medicine, law, engineering, and any domain where both pattern recognition and explicit constraints matter. If solved badly, it becomes two fragile systems duct-taped together and marketed as a breakthrough.

Explainable AI [XAI, methods for making AI behavior understandable to humans] emerged partly because black boxes started making consequential decisions. A model that recommends a movie can be mysterious. A model that denies care, flags a student, predicts recidivism, rejects a loan, or misreads a tumor must answer harder questions. Why this output? Which features mattered? Would a small change alter the decision? Is the explanation faithful to the model, or merely a polite fairy tale for regulators?
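For the simplest possible model, the questions above have exact answers. The sketch below uses a hypothetical linear loan score; the feature names, weights, threshold and applicant are all invented. For a linear model, weight × value is a faithful attribution, and the change in any single feature needed to flip the decision can be solved directly. For nonlinear models, such as deep networks, attribution and counterfactual methods only approximate these quantities, and that is where the polite fairy tales can creep in.

```python
# Hypothetical linear loan score: every number here is illustrative.
WEIGHTS = {"income_lakh": 0.8, "existing_debt_lakh": -1.5, "years_employed": 0.4}
BIAS = -2.0
THRESHOLD = 0.0   # approve when score >= THRESHOLD

def score(applicant):
    return BIAS + sum(WEIGHTS[k] * applicant[k] for k in WEIGHTS)

def contributions(applicant):
    """For a linear model, weight * value is an exact, faithful attribution."""
    return {k: WEIGHTS[k] * applicant[k] for k in WEIGHTS}

def single_feature_counterfactuals(applicant):
    """Change needed in each feature, alone, to land exactly on the threshold."""
    gap = THRESHOLD - score(applicant)
    return {k: gap / w for k, w in WEIGHTS.items()}

applicant = {"income_lakh": 4.0, "existing_debt_lakh": 2.5, "years_employed": 3.0}
print(round(score(applicant), 2))   # negative, so this applicant is rejected
print(contributions(applicant))
print(single_feature_counterfactuals(applicant))
```

Here the debt term dominates the rejection, and clearing under one lakh of debt would flip the decision: a local explanation for this applicant only, which says nothing about whether the weights themselves are fair.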

XAI is not a garnish. It is a governance requirement when systems enter high-stakes environments. But explanation has layers. A mathematically faithful explanation may be useless to a clinician. A simple explanation may be false. A local explanation may not reveal global behavior. A model card may document intent without revealing operational misuse. In Calcutta terms, saying “the tram was delayed because of congestion” may be true, but it does not tell you why the same congestion has become the city’s unofficial transport policy.

Quantum Machine Learning [QML, attempts to use quantum computing ideas or hardware for machine learning] is the tribe that arrives wearing difficult mathematics and an expression of cosmic grievance. Some of it is serious research. Quantum computing may eventually help with certain optimization, simulation, sampling, or linear algebra problems. But much of what passes through public discourse is fog wearing a lab coat. The hard question is not whether quantum sounds impressive. It does. So does a Sanskritized government notice. The question is whether a quantum method provides practical advantage over classical methods for a real workload under real constraints. Often, the answer is still: ask again later, preferably with less incense.

Artificial General Intelligence [AGI, a hypothetical AI with broad human-level or greater capability across many domains] is less a school than a weather system. It pulls in philosophers, engineers, investors, futurists, doomers, boomers, monks of probability, and people who would previously have started cults but now have podcasts. The AGI debate is not silly, because increasingly general systems do raise serious questions about autonomy, labor, safety, power, and institutional control. But the debate often becomes theatrical because nobody agrees on the measuring stick. General intelligence is not one thing. It is reasoning, embodiment, memory, transfer, planning, social understanding, grounding, self-correction, and adaptation across hostile novelty. A model may be astonishing at language and still not know what a wet staircase feels like under a nervous foot.

The large model era has made the tribal map messier. Modern AI systems are rarely pure members of one school. An LLM may use deep learning for training, reinforcement learning from human feedback for alignment, retrieval systems for external memory, symbolic tools for calculation, Bayesian evaluation ideas for uncertainty, and XAI methods for inspection. The old tribes now live inside pipelines. The neural net generates. The search system retrieves. The rules filter. The database stores. The governance layer panics. The user blames “AI,” as if one ghost did the whole thing.

The crucial distinction, often missed, is between representation and intelligence. A system can be very good at transforming representations without understanding the world in the human sense. It can map text to text, image to label, signal to prediction, state to action. That is powerful. But the map is not the territory, and the embedding is not the thing embedded. A Bengali knows this from food. You may possess the recipe, the photograph, the restaurant review, the calorie table, the supply chain record, and the invoice for the fish. None of these is the taste of mustard Hilsa arriving dangerously hot on a plate.

Many AI failures are representation failures mislabeled as model failures. The data does not contain the reality people think it contains. The labels are noisy. The categories are political. The measurements are proxies. The workflow that created the data has bent it. The absence of evidence becomes evidence of absence. The system learns the organization’s habits and mistakes, then returns them with mathematical confidence. This is why a model trained on institutional data can become a mirror with a management degree: accurate in places, distorted in others, and extremely pleased with itself.

The practical implication is plain. Before asking which AI tribe to join, ask what kind of problem you actually have. If the domain is governed by explicit rules, symbolic systems may be appropriate. If the domain is noisy and perceptual, neural methods may shine. If uncertainty is central, Bayesian methods deserve respect. If the problem is sequential and reward-driven, reinforcement learning may fit, though with caution and adult supervision. If the domain requires both perception and reasoning, neuro-symbolic approaches may be worth the pain. If human consequences are serious, explainability and provenance cannot be bolted on afterward like a balcony added to an unsafe building.

The clean solution is prevented by the oldest villain in computing: the world. Data is partial. Institutions lie to themselves. Incentives deform measurement. Humans invent workarounds. Labels drift. Regulations lag. Vendors oversell. Researchers simplify. Production systems decay. The model is only one animal in the zoo. The pipeline, the users, the data-generating process, the feedback loops, and the governance machinery all matter. AI does not escape architecture. It exposes it.

So the Calcutta Bengali’s guide is this: treat every AI school as a temperament, not a religion. The symbolists bring grammar. The neural crowd brings instinct. The Bayesians bring doubt. The evolutionary people bring mutation and mischief. The reinforcement learners bring consequences. The fuzzy logicians bring graded reality. The expert systems bring institutional memory. The swarm people bring collective behavior. The neuro-symbolic crowd brings uneasy diplomacy. The quantum people bring possibility and fog. The explainability people bring the necessary irritation of accountability. The AGI people bring apocalypse, salvation, venture capital, and occasionally a useful question.

Use them all carefully. Mock them when needed. Respect them when earned. And never believe anyone who says one method has solved intelligence. That person has either not understood intelligence, not understood method, or recently raised funding.